
This dataset contains records of marketing metrics, aggregated by day.

The second dataset is an online marketing metrics dataset. A market segment to which the opportunity belongs.A date, potentially when an opportunity was identified.The first dataset is a sales pipeline dataset, which contains a list of slightly more than 20,000 sales opportunity records for a fictitious business. Example datasets for the ETL workflowįor our example, we’ll use two publicly available Amazon QuickSight datasets. Each component performs a discrete function, or task, allowing you to scale and change applications quickly. You build applications from individual components. AWS Step Functions is a web service that enables you to coordinate the components of distributed applications and microservices using visual workflows. In this post, I show you how to use AWS Step Functions and AWS Lambda for orchestrating multiple ETL jobs involving a diverse set of technologies in an arbitrarily-complex ETL workflow. Also, tracing the overall ETL workflow’s execution status and handling error scenarios can become a challenge. But relying on CloudWatch Events alone means that there’s no single visual representation of the ETL workflow.

Amazon S3, the central data lake store, also supports CloudWatch Events. How can we orchestrate an ETL workflow that involves a diverse set of ETL technologies? AWS Glue, AWS DMS, Amazon EMR, and other services support Amazon CloudWatch Events, which we could use to chain ETL jobs together. The challenge of orchestrating an ETL workflow They include: AWS Database Migration Service (AWS DMS), Amazon EMR (using the Steps API), and even Amazon Athena.

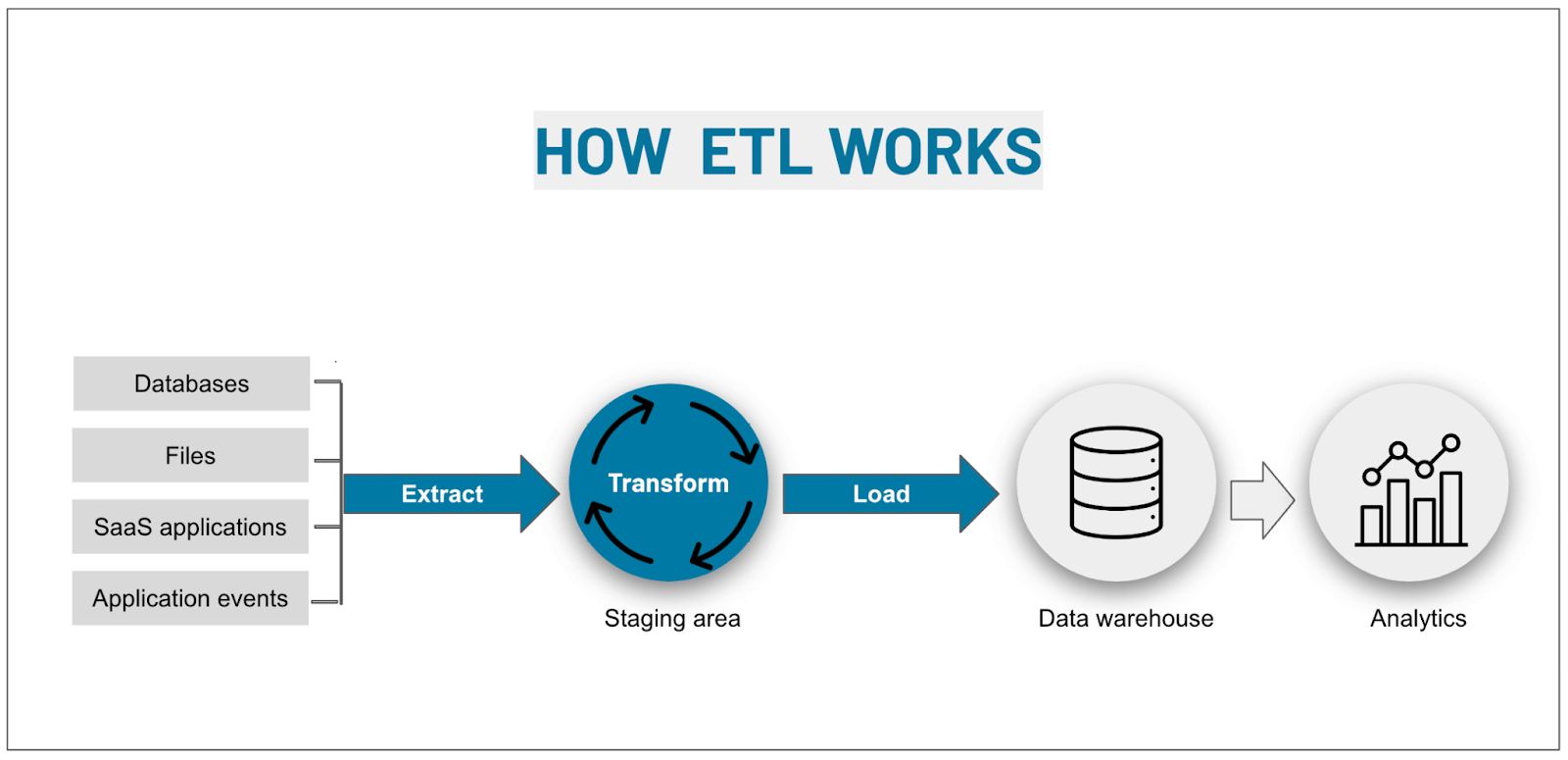

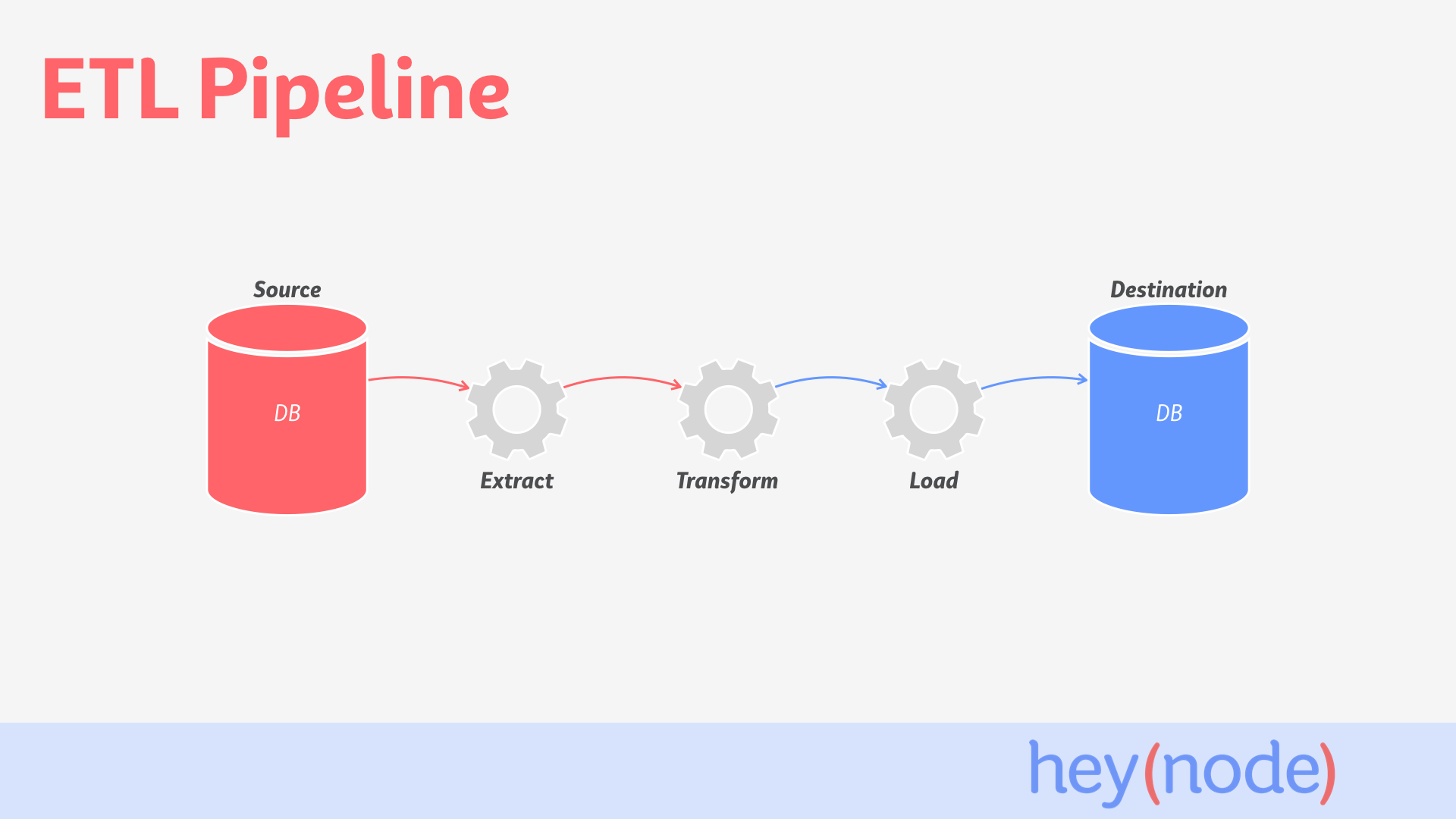

Other AWS Services also can be used to implement and manage ETL jobs. AWS Glue is a fully managed extract, transform, and load service that makes it easy for customers to prepare and load their data for analytics. Amazon S3 as a target is especially commonplace in the context of building a data lake in AWS.ĪWS offers AWS Glue, which is a service that helps author and deploy ETL jobs. The sources and targets of an ETL job could be relational databases in Amazon Relational Database Service (Amazon RDS) or on-premises, a data warehouse such as Amazon Redshift, or object storage such as Amazon Simple Storage Service (Amazon S3) buckets. An ETL job typically reads data from one or more data sources, applies various transformations to the data, and then writes the results to a target where data is ready for consumption. It transforms raw data into useful datasets and, ultimately, into actionable insight. Extract, transform, and load (ETL) operations collectively form the backbone of any modern enterprise data lake.
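
The extract, transform, and load steps described at the start of this post can be illustrated with a minimal, in-memory Python sketch. It assumes a hypothetical raw event log (the `timestamp`, `channel`, and `clicks` columns are invented for illustration and are not the QuickSight dataset's schema) and rolls it up into daily totals, similar in spirit to the day-level marketing metrics dataset. A plain dictionary stands in for a target such as an Amazon S3 prefix. It needs Python 3.9 or later.

```python
import csv
import io
from collections import defaultdict

# Hypothetical raw input: one row per marketing event (column names are assumptions).
RAW_CSV = """timestamp,channel,clicks
2017-01-01T09:15:00,search,3
2017-01-01T17:40:00,social,5
2017-01-02T08:05:00,search,4
"""


def extract(text: str) -> list[dict[str, str]]:
    """Extract: parse CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list[dict[str, str]]) -> dict[str, int]:
    """Transform: sum clicks per calendar day; the channel column is dropped."""
    totals: dict[str, int] = defaultdict(int)
    for row in rows:
        day = row["timestamp"][:10]  # keep only the "YYYY-MM-DD" part
        totals[day] += int(row["clicks"])
    return dict(sorted(totals.items()))


def load(daily: dict[str, int], target: dict[str, str]) -> None:
    """Load: write one CSV object per day into a dict standing in for an S3 prefix."""
    for day, clicks in daily.items():
        target[f"marketing/daily/{day}.csv"] = f"date,clicks\n{day},{clicks}\n"


if __name__ == "__main__":
    store: dict[str, str] = {}
    load(transform(extract(RAW_CSV)), store)
    for key, body in store.items():
        print(key, body.splitlines()[1])
```

In a real workflow, each of these functions would be backed by a service such as AWS Glue, and the dictionary would be replaced by writes to Amazon S3.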
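
A Step Functions workflow is defined in the Amazon States Language, a JSON document of named states. The sketch below builds one such definition in Python for the two example datasets. A `Parallel` state runs one AWS Glue job per dataset, a Lambda task then joins the results, and a shared `Catch` routes any failure to a single `Fail` state. The job names, the Lambda ARN, and the use of the Glue `startJobRun.sync` service integration are assumptions for illustration; this is not necessarily how the workflow in this post is wired.

```python
import json

# Placeholder identifiers: the job names and Lambda ARN below are hypothetical.
SALES_JOB = "process-sales-pipeline"
MARKETING_JOB = "process-marketing-metrics"
JOIN_LAMBDA_ARN = "arn:aws:lambda:us-east-1:123456789012:function:join-datasets"

# Route any error (States.ALL) to the single failure state.
CATCH_ALL = [{"ErrorEquals": ["States.ALL"], "Next": "WorkflowFailed"}]


def glue_task(job_name: str) -> dict:
    """A Task state that starts an AWS Glue job and waits for it to finish (.sync)."""
    return {
        "Type": "Task",
        "Resource": "arn:aws:states:::glue:startJobRun.sync",
        "Parameters": {"JobName": job_name},
        "End": True,
    }


definition = {
    "Comment": "Process both datasets in parallel, then join them",
    "StartAt": "ProcessDatasets",
    "States": {
        "ProcessDatasets": {
            "Type": "Parallel",
            "Branches": [
                {"StartAt": "SalesJob", "States": {"SalesJob": glue_task(SALES_JOB)}},
                {"StartAt": "MarketingJob", "States": {"MarketingJob": glue_task(MARKETING_JOB)}},
            ],
            "Catch": CATCH_ALL,  # a failure in either branch fails the Parallel state
            "Next": "JoinDatasets",
        },
        "JoinDatasets": {
            "Type": "Task",
            "Resource": "arn:aws:states:::lambda:invoke",
            # Pass the Parallel state's output (one result per branch) to the function.
            "Parameters": {"FunctionName": JOIN_LAMBDA_ARN, "Payload.$": "$"},
            "Catch": CATCH_ALL,
            "End": True,
        },
        "WorkflowFailed": {
            "Type": "Fail",
            "Error": "ETLWorkflowFailed",
            "Cause": "A job in the ETL workflow failed",
        },
    },
}

if __name__ == "__main__":
    print(json.dumps(definition, indent=2))
```

Because every job and every failure path lives in one definition, the Step Functions console can render the whole workflow as a single graph and show each execution's status, which chaining jobs through CloudWatch Events alone does not provide. The printed JSON could be supplied as the `definition` argument when creating a state machine, for example through boto3's `create_state_machine`, which also requires a name and an IAM role ARN.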

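To show the orchestration idea itself without any AWS dependencies, here is a toy Python runner. It is not Step Functions and not the post's implementation. One coordinator knows the full dependency graph, runs jobs in dependency order, records every job's status in one place, and skips downstream jobs when an upstream job fails. The job names and functions are hypothetical stand-ins for Glue, EMR, or DMS jobs, and the code requires Python 3.9 or later for `graphlib`.

```python
from graphlib import TopologicalSorter
from typing import Callable


# Hypothetical jobs standing in for Glue, EMR, or DMS jobs.
def load_sales() -> None:
    pass


def load_marketing() -> None:
    raise RuntimeError("source bucket not found")  # simulated failure


def join_datasets() -> None:
    pass


JOBS: dict[str, Callable[[], None]] = {
    "load_sales": load_sales,
    "load_marketing": load_marketing,
    "join_datasets": join_datasets,
}

# Each job maps to the set of jobs that must succeed before it runs.
DEPENDENCIES: dict[str, set[str]] = {
    "load_sales": set(),
    "load_marketing": set(),
    "join_datasets": {"load_sales", "load_marketing"},
}


def run_workflow(
    jobs: dict[str, Callable[[], None]], dependencies: dict[str, set[str]]
) -> dict[str, str]:
    """Run jobs in dependency order and return a single status map for the workflow."""
    status: dict[str, str] = {}
    # static_order() yields every job after all of its dependencies.
    for name in TopologicalSorter(dependencies).static_order():
        if any(status[dep] != "SUCCEEDED" for dep in dependencies[name]):
            status[name] = "SKIPPED"  # an upstream job failed or was skipped
            continue
        try:
            jobs[name]()
            status[name] = "SUCCEEDED"
        except Exception as exc:  # record the failure instead of aborting the run
            status[name] = f"FAILED: {exc}"
    return status


if __name__ == "__main__":
    for job, state in run_workflow(JOBS, DEPENDENCIES).items():
        print(f"{job}: {state}")
```

With the simulated failure, the status map records `load_sales` as succeeded, `load_marketing` as failed, and `join_datasets` as skipped. Jobs run sequentially here for simplicity; a `Parallel` state in Step Functions would run independent branches concurrently.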